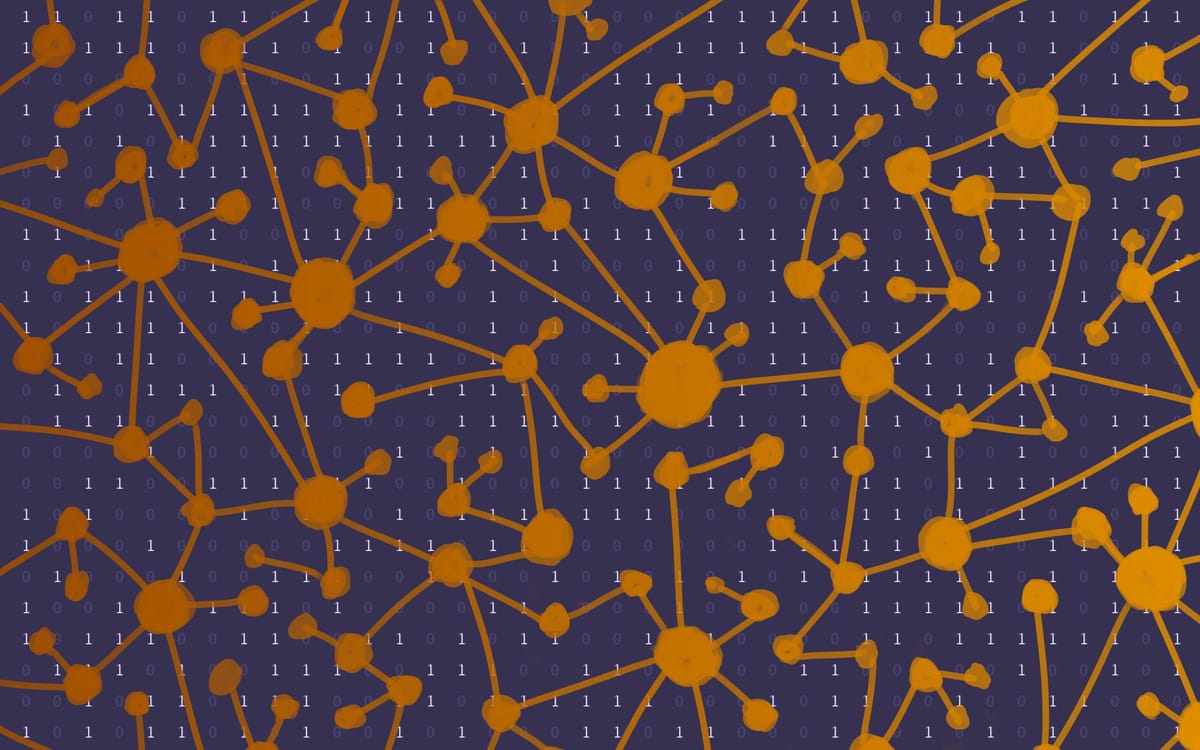

Networks are math squares disguised as drawings

This is why neural networks are linear algebra under the hood

I have a confession to make: I was really, really bad with vectors and matrices in school.

It's a long story how I went from loving math as a kid to kind of hating it in college. But a key episode in that saga came when my 9th grade algebra teacher realized that the "normal kid" math track my mom had signed me up for meant I wasn't going to hit calculus before graduating unless I skipped a chunk of math mid-year to jump into the "nerdy kid" track. The chunk I skipped was vectors, and for years I got cold sweats any time I saw the dreaded [ ] brackets. Despite eventually developing a decent intuitive grasp of what matrices and vectors represented, I was hopeless at doing anything with them. To survive college physics, I teamed up with a math major who was equally hopeless at translating physics problems into math but was an equation-solving machine.

Now, as a science journalist liberated from the need to actually do math, I can finally appreciate it. That includes my old enemy: matrices, the math squares of doom. And today I want to share something amazing about them.

Any network (think maps of social connections, routes between cities, links between internet pages, anything really) can be written as a matrix, so long as it isn't infinite.
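To make that concrete, here's a minimal sketch in Python. The four cities and the roads between them are made up for illustration, and this is just one common way to do the conversion (usually called an adjacency matrix): give every node a row and a column, then write a 1 wherever two nodes are connected and a 0 wherever they aren't.

```python
# Hypothetical toy network: four cities and the roads between them.
cities = ["A", "B", "C", "D"]
roads = [("A", "B"), ("A", "C"), ("B", "C"), ("C", "D")]

# Give each city a row/column number in the grid.
index = {name: i for i, name in enumerate(cities)}

# Start with a square grid of zeros: one row and one column per city.
n = len(cities)
matrix = [[0] * n for _ in range(n)]

# Mark a 1 wherever a road connects two cities.
for a, b in roads:
    i, j = index[a], index[b]
    matrix[i][j] = 1
    matrix[j][i] = 1  # roads run both ways, so the grid is symmetric

for name, row in zip(cities, matrix):
    print(name, row)
```

Each row lists one city's connections, so the row for C reads 1, 1, 0, 1: roads to A, B and D, and no road to itself. The drawing and the grid carry exactly the same information.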

Translation: a meshwork of nodes connected by edges is really just a grid of numbers in disguise. And if that's not cool enough for you, there's an AI angle. The fact that networks are also math squares is why neural networks are basically linear algebra under the hood.
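Here's a rough sketch of what that looks like for a single layer of a neural network, again in Python. The numbers are invented, and the name `layer` is just a label for this example. Real networks also add a bias term and squash the result through a nonlinear function between layers, both of which I've left out to keep the matrix part visible.

```python
# Hypothetical tiny layer: 3 input nodes wired to 2 output nodes.
# weights[j][i] is the strength of the edge from input i to output j,
# so the wiring diagram is stored as a 2-by-3 grid of numbers.
weights = [
    [0.5, -1.0, 2.0],
    [1.0, 0.0, 0.5],
]
inputs = [2.0, 1.0, 4.0]


def layer(weights, inputs):
    """Multiply the weight matrix by the input vector, one row per output node."""
    return [sum(w * x for w, x in zip(row, inputs)) for row in weights]


outputs = layer(weights, inputs)
print(outputs)  # [8.0, 4.0]
```

Each output node just adds up its inputs, scaled by the weight on each incoming edge. Doing that for every output node at once is a matrix-times-vector multiplication.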