Who gets the job? Here's how computers could help catch unfair rankings

I am weirdly hopeful that it could be easier to notice biased AI than biased humans?

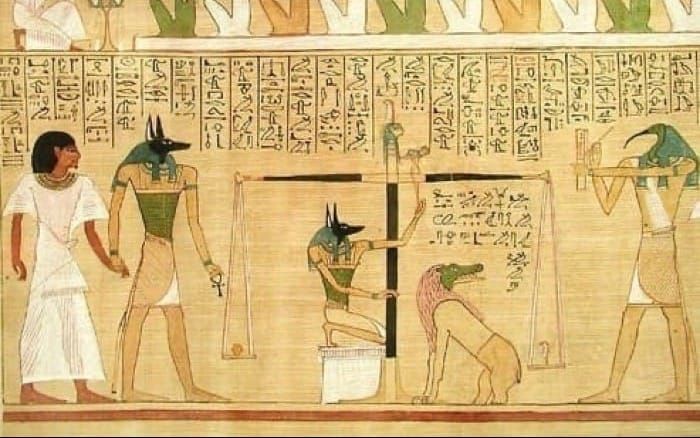

Jobs. University admissions. Grants. We apply for a lot of stuff these days. It used to be that Joe from Human Resources would pull out the dreaded scales of Anubis to weigh your heart against a feather to determine whether your soul should be eaten by a primordial crocodile goddess — er, I mean, rank your application. But more and more, Joe is letting bots do the ranking for him.

Maybe this is a good thing: Joe is just a dude, and honestly he's a little racist. Maybe AI can be fairer. Although... AI also consumed the entire internet to come into being, and the internet is also pretty racist.

So, how can we tell when an algorithm is fair? And what does being fair even mean?

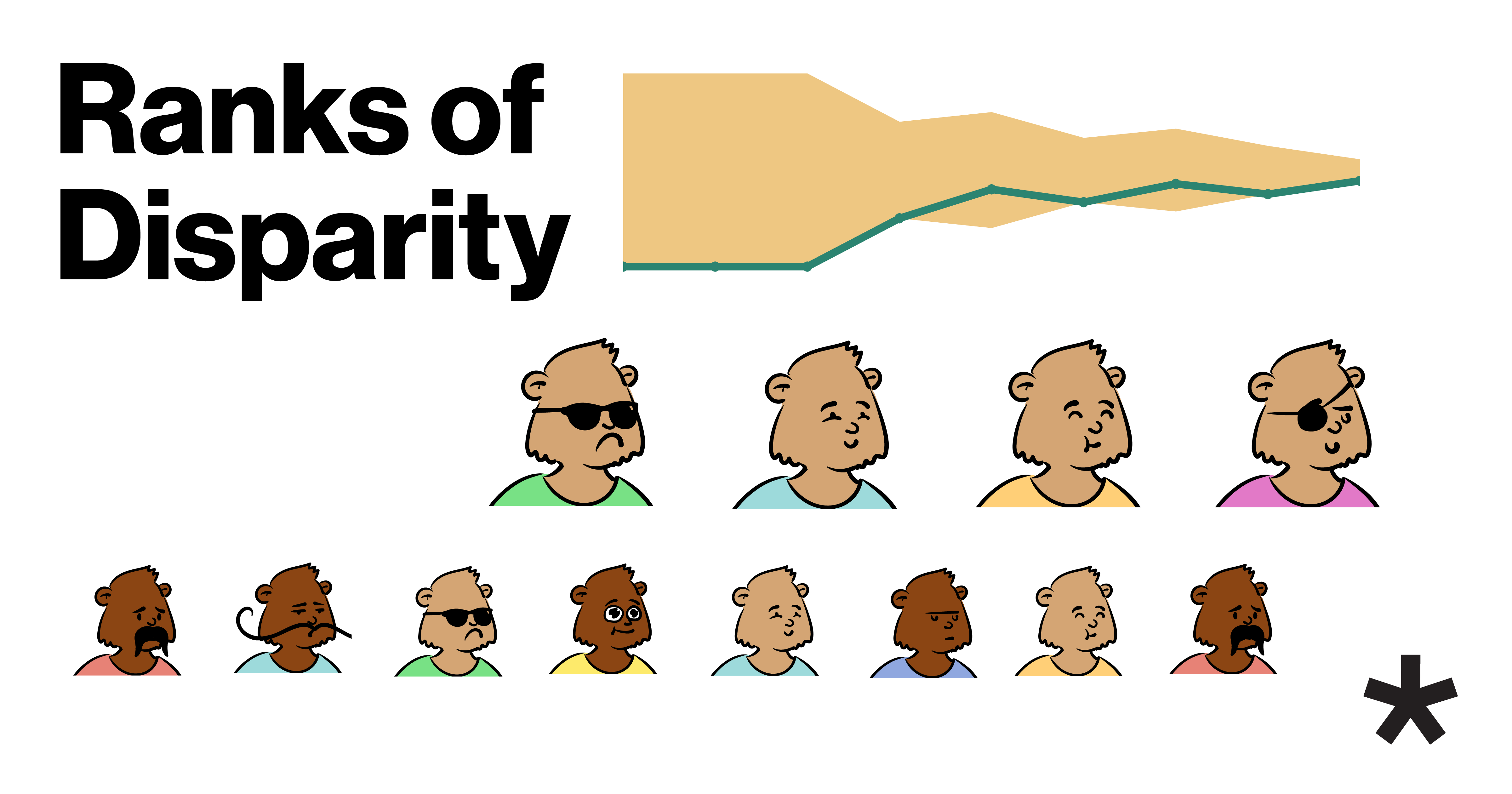

This week's TLDR is a little different. Instead of a study that came out last week, I wanted to highlight this amazing interactive website that was made to visually explain a new way to measure the fairness of ranking algorithms. The method, called hyperFA*IR, was presented last year at the ACM Conference on Fairness, Accountability, and Transparency. It highlights how failing to account for how the pool of remaining applicants changes as you make selections can make some algorithms seem fairer than they really are.

What happened?

In a nutshell, hyperFA*IR is a new way to rank rankings on fairness.

Most approaches to evaluating whether or not an algorithm is fair assumes that each candidate is considered independently — so if a candidate pool is 70% men and 30% women, you'd naively assume that the odds of any one woman being selected from the pool for any slot in the ranking are 3:7.

But that's not how real rankings work. When you're ranking people, your candidate pool doesn't re-fill after you pull someone out to rank them. So if 10 men and 5 women applied for a position and you pick men for the top 5 slots, the remaining pool of candidates for slot #6 would be 5 men and 5 women — it gets more and more suspicious that you've got some bias against picking women as men drain from the pool. hyperFA*IR accounts for this.

Who did it?

The study comes from Fariba Karimi's team at the Complexity Science Hub in Vienna, which is focused on understanding how algorithms can worsen or increase fairness. It was led by graduate student Mauritz Cartier van Dissel, who is an applied mathematician. The interactive visualization was made by Liuhuaying Yang and Diego Baptista Theuerkauf.

Why it matters

What does fair mean?

That question is the reason I think this study matters – not because it solved fairness once and for all (if that's even possible), but because it is a good reminder of how seemingly straightforward concepts like "fair" don't mean just one thing. This study provides a tool for evaluating how fair ranking algorithms are according to a very specific understanding of fairness that's something like this:

First, assume that talent (or whatever quality you're ranking) is distributed evenly among groups. The minority isn't less talented than the majority. However, the method we use to score candidates' talent might not be fair. As a trivial example, maybe the hiring manager scoring the interviews is a raging sexist.

"The underlying assumption is basically that both groups should have the same capable people. But then you have different aspects that bias the ranking itself," says study author Mauritz Cartier van Dissel.

Once you make that assumption of equal talent, one way of measuring fairness is to see how closely the results align to what you'd expect if ranking wasn't biased — i.e., was random. If your applicant pool was 70% majority, 30% minority, then it's not statistically weird for the top candidate to be from the majority. It's even feasible that the top three candidates would, just by chance, be from the majority. But what about the top 4? Top 5? At a certain point, underrepresentation starts feeling fishy. hyperFA*IR provides a way to determine if a ranking algorithm's results have exceeded that threshold of fishiness (which you can set to be stricter or more permissive).

You might not agree with that understanding of fairness. That's fine. But it is, at least, transparent. When we code fairness into a computer, we're forced to get specific about what we mean by fair. I think that is a good thing. There's probably no ranking algorithm or algorithm for evaluating fairness (like the one from this study) that everyone will like. In fact, several definitions of fairness disagree, van Dissel told me.

But unlike the inscrutable values floating around inside the heads of decision-makers, algorithms can be published and subjected to public debate and scrutiny. At least in theory.

Look. You and I both know that if I were a barista, you'd 100% be leaving a tip right now. The social pressure to drop a coin in that jar would just be overwhelming. But you're presumably reading this thing alone on a universe-rectangle, so I can't rely on my mere presence to remind you to be nice.

If you made it this far I assume you're enjoying the article. So maybe drop a coin in my digital tip jar. It's just €2 per month (€0.25 per post!) — a cheap and fully automated weekly warm fuzzy feeling for the digital age.

Thanks for reading

There are many ways you can help:

- Subscribe, if you haven't already!

- Share this post on Bluesky, Twitter/X, LinkedIn, Facebook, or wherever else you hang out online.

- Become a patron for the price of 1 cappuccino per month

- Drop a few bucks in my tip jar

- Send recommendations for research to feature in my monthly paper roundups to elise@reviewertoo.com with the subject line "Paper Roundup Recommendation"

- Tell me about your research for a Q&A post (email enquiries to elise@reviewertoo.com)

- Follow me on Bluesky

- Spread the word!